
Model: GPT-5.2 at temperature 0, with the same system prompt and user prompt for every scenario. Each format is derived from the same LLM output rather than generated independently.

The LLM generates OpenUI Lang. Thesys C1 JSON is a normalized AST projection (`component` + `props`) that drops parser metadata (`type`, `typeName`, `partial`, `__typename`). The YAML payload and the Vercel JSON-Render output are two serializations of the same json-render spec projection (`root`, `elements`, optional `state`): the JSON-Render output is emitted as JSONL of RFC 6902 patches, while the YAML is serialized with `yaml.stringify(..., { indent: 2 })`.
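One way to picture the JSONL form is as a replay of the spec through RFC 6902 `add` operations, one per line. A minimal sketch, assuming a spec shaped as `{ root, elements, state? }` with `elements` keyed by element id; the `Spec` type, element contents, and exact patch paths are assumptions, not the JSON-Render serializer itself:

```typescript
// Hypothetical shape of a json-render spec projection (an assumption for
// this sketch, not the library's published type).
interface Spec {
  root: string;
  elements: Record<string, unknown>;
  state?: Record<string, unknown>;
}

// One RFC 6902 "add" operation.
interface AddOp {
  op: "add";
  path: string;
  value: unknown;
}

// RFC 6901 JSON Pointer escaping: "~" becomes "~0" first, then "/" becomes "~1".
function escapePointer(token: string): string {
  return token.replace(/~/g, "~0").replace(/\//g, "~1");
}

// Serialize a spec as JSONL: one "add" operation per line, applied to an
// initially empty object. "/elements" is created before its children
// because RFC 6902 "add" requires the parent location to exist.
function specToJsonlPatches(spec: Spec): string {
  const ops: AddOp[] = [
    { op: "add", path: "/root", value: spec.root },
    { op: "add", path: "/elements", value: {} },
  ];
  Object.keys(spec.elements).forEach((id) => {
    ops.push({ op: "add", path: `/elements/${escapePointer(id)}`, value: spec.elements[id] });
  });
  if (spec.state !== undefined) {
    ops.push({ op: "add", path: "/state", value: spec.state });
  }
  return ops.map((op) => JSON.stringify(op)).join("\n");
}

console.log(
  specToJsonlPatches({
    root: "main",
    elements: { main: { component: "Stack", props: { children: [] } } },
  }),
);
```

Emitting one operation per line is what lets a client apply the output incrementally as it streams, instead of waiting for one complete JSON document.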

All formats are measured with tiktoken using the `gpt-5` model encoder, the same tokenizer family as GPT-5.2. Whitespace and formatting are counted as-is. For YAML, the benchmark counts only the document payload and excludes the outer `yaml-spec` fence.

Latency figures assume a constant throughput of 60 tokens/s. Real latency also depends on time to first token (TTFT) and the network. The streaming advantage is most visible in when the last element renders, not just in overall completion time.
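Under the constant-throughput assumption, a latency estimate is just token count divided by throughput. A minimal sketch; the optional `ttftSeconds` term is an assumption added for illustration, since the benchmark figures themselves assume constant throughput only:

```typescript
// Estimate wall-clock time to stream a response of `tokens` tokens.
// Defaults to the benchmark's 60 tok/s. `ttftSeconds` (time to first token)
// is a hypothetical add-on and defaults to 0, matching the benchmark.
function estimateStreamSeconds(
  tokens: number,
  tokensPerSecond = 60,
  ttftSeconds = 0,
): number {
  if (tokensPerSecond <= 0) {
    throw new RangeError("tokensPerSecond must be positive");
  }
  return ttftSeconds + tokens / tokensPerSecond;
}

// The ~400-token full program vs. the ~60-token patch from the
// incremental-editing example on this page.
const fullProgram = estimateStreamSeconds(400); // ~6.67 s
const patchOnly = estimateStreamSeconds(60); // 1 s
console.log(fullProgram.toFixed(2), patchOnly.toFixed(2));
```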

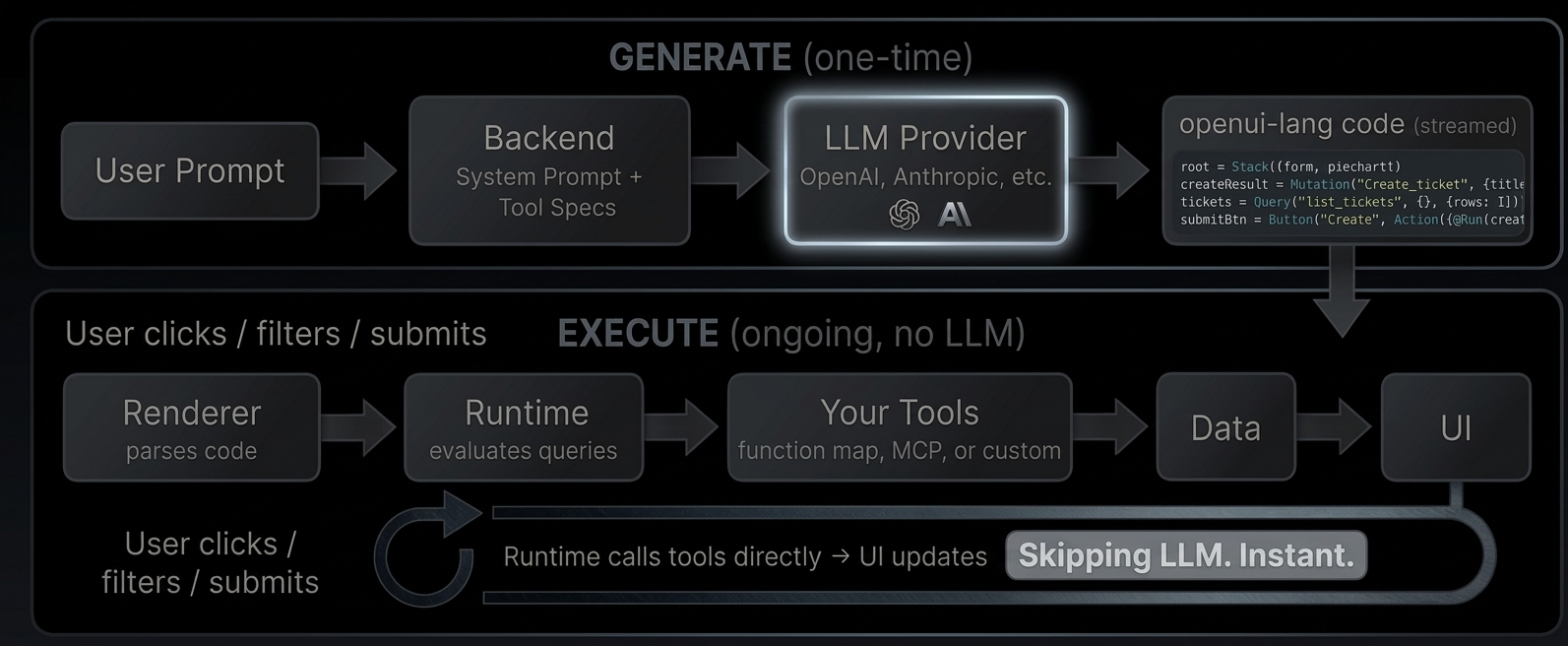

Generate [#generate]

The user describes what they want. Your backend sends the request to an LLM along with a system prompt that includes your component library and tool descriptions. The LLM responds with OpenUI Lang code, a compact declarative format that describes the UI layout, data sources, and interactions.

Execute [#execute]

The Renderer parses the generated code. When it encounters a `Query("list_tickets")`, the runtime calls your tool directly, with no LLM round trip. When the user clicks a button that triggers `@Run(createResult)`, the runtime executes the mutation against your tool. When a `$variable` bound to a dropdown changes, every query that depends on it re-fetches automatically.

The LLM generated the wiring. The runtime executes it.

What this enables [#what-this-enables]

* **Reactive dashboards** with date range filters, auto-refresh, and live KPIs computed from query results

* **CRUD interfaces** with create forms, edit modals, tables with search and sort

* **Monitoring tools** with periodic refresh, server health metrics, and error rate tracking

* **Any tool-connected UI.** If you can expose it as a tool (via [MCP](https://modelcontextprotocol.io/docs/getting-started/intro) or function map), the LLM can wire it into a UI

Try it live: [Open the GitHub Demo](/demo/github)

Iterate and refine [#iterate-and-refine]

The LLM doesn't have to get it right the first time. With [incremental editing](/docs/openui-lang/incremental-editing), the user says "add a pie chart", the LLM outputs only the 2-3 changed statements, and the parser merges them into the existing code. Existing queries, state, and bindings stay intact.

```
Turn 1:                          Turn 2 (patch only):

root = Stack([header, tbl])      root = Stack([header, chart, tbl])   ← updated
header = CardHeader("Tickets")   chart = PieChart(["Open","Closed"],  ← new
tickets = Query(...)               [@Count(@Filter(..., "open")),
tbl = Table([...])                  @Count(@Filter(..., "closed"))
                                   ], "donut")

20 lines, ~400 tokens            3 lines, ~60 tokens (85% fewer)
```
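Merging a patch like this amounts to keying statements by their left-hand name: a patch statement replaces the same-named statement, and a new name is added. A hypothetical sketch of that idea, not the OpenUI parser; it assumes each statement starts at column 0 with `name = ...` and that continuation lines are indented:

```typescript
// Hypothetical statement-level merge for incremental edits (illustration
// only). Statements are keyed by the name on the left of `=`.
type Program = Map<string, string>;

// Matches `name =` at the start of a line, but not `name ==`.
const STATEMENT_START = /^([A-Za-z_$][\w$]*)\s*=(?!=)/;

function parseStatements(source: string): Program {
  const program: Program = new Map();
  let current: string | null = null;
  for (const line of source.split("\n")) {
    const name = STATEMENT_START.exec(line)?.[1];
    if (name !== undefined) {
      current = name;
      program.set(name, line);
    } else if (current !== null && line.trim() !== "") {
      // Continuation line: append it to the statement being read.
      program.set(current, `${program.get(current) ?? ""}\n${line}`);
    }
  }
  return program;
}

// Patch statements replace same-named statements in place (Map keeps the
// original position); new names are appended. Everything the patch does
// not mention, such as queries and state, is kept untouched.
function mergePatch(existing: string, patch: string): string {
  const merged = parseStatements(existing);
  parseStatements(patch).forEach((text, name) => merged.set(name, text));
  return Array.from(merged.values()).join("\n");
}
```

Keeping unchanged statements verbatim is what lets existing bindings survive the edit: `tickets` is never re-emitted, so nothing that references it has to change.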

Connecting tools [#connecting-tools]

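The Renderer accepts a `toolProvider` prop that tells the runtime how to call your tools. There are two options: a function map or an [MCP](https://modelcontextprotocol.io/docs/getting-started/intro) client.

**Function map**, a plain object of async functions.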
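A minimal sketch of such a map, assuming each key is the tool name used in `Query(...)`/`Mutation(...)` and each function receives a plain arguments object and resolves to JSON-serializable data. The in-memory `store` and `Ticket` type are hypothetical stand-ins for your database or API:

```typescript
// Hypothetical in-memory store standing in for a real database or API.
interface Ticket {
  id: number;
  title: string;
  priority: string;
  status: "open" | "closed";
}

const store: Ticket[] = [];

// Function map: keys are tool names the LLM can reference, values are
// async functions. Argument/return shapes here are assumptions.
const tools = {
  // Backs Query("list_tickets", {}, {rows: []}).
  async list_tickets(_args: Record<string, unknown>) {
    return { rows: store.map((t) => ({ ...t })) };
  },
  // Backs Mutation("create_ticket", {title: $title, priority: $priority}).
  async create_ticket(args: { title: string; priority: string }) {
    const ticket: Ticket = {
      id: store.length + 1,
      title: args.title,
      priority: args.priority,
      status: "open",
    };
    store.push(ticket);
    return ticket;
  },
};
```

An object like this is what the function-map option refers to; the exact argument and return types the Renderer expects come from its `toolProvider` typings, which this sketch does not reproduce.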

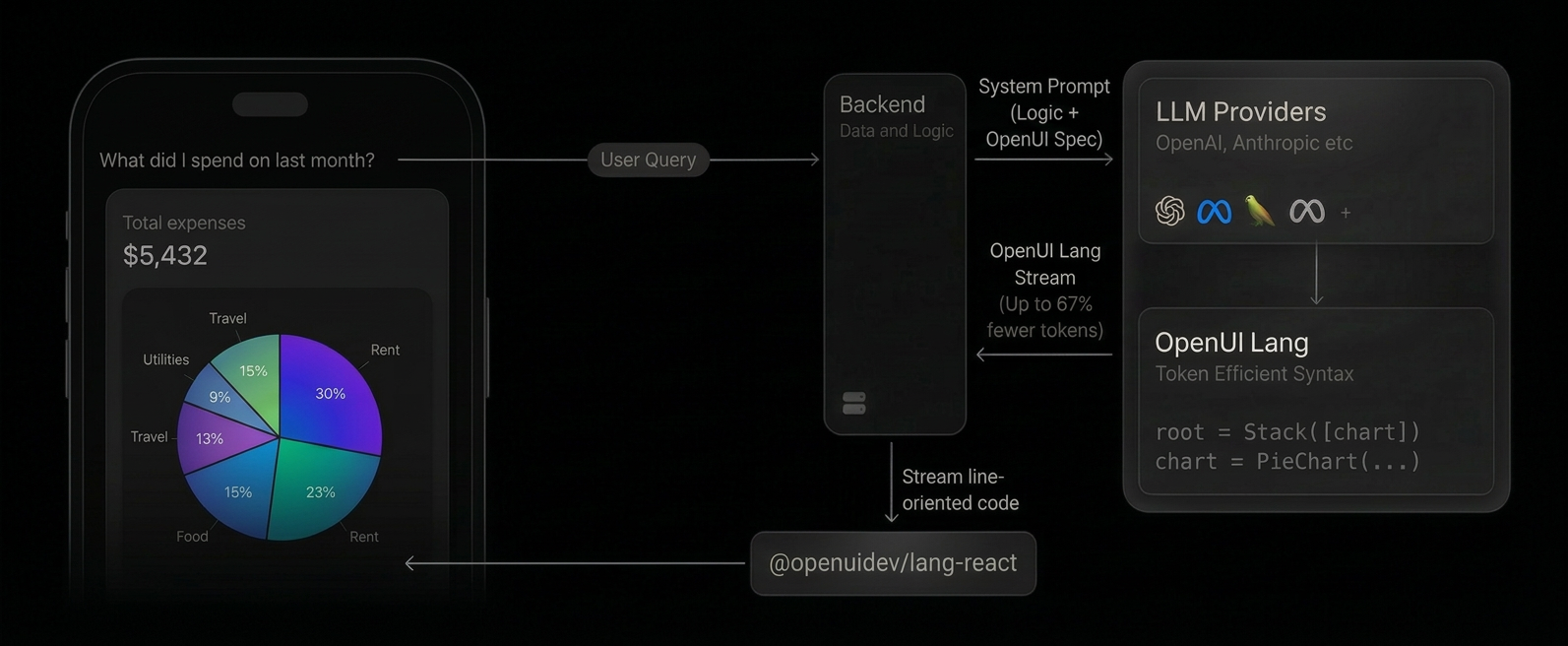

1. **System prompt includes OpenUI Lang spec**: Your backend appends the generated component library prompt alongside your system prompt, instructing the LLM to respond in OpenUI Lang instead of plain text or JSON.

2. **LLM generates OpenUI Lang**: Instead of returning markdown, the model outputs a compact, line-oriented syntax (e.g., `root = Stack([chart])`) constrained to your component library.

3. **Streaming render**: On the client, the `

OpenUI Lang connects to your backend through **tools**. A tool is any function your server exposes (a database query, an API call, a calculation). The LLM generates `Query` and `Mutation` statements that call these tools. The runtime executes them directly and feeds the results into your UI.

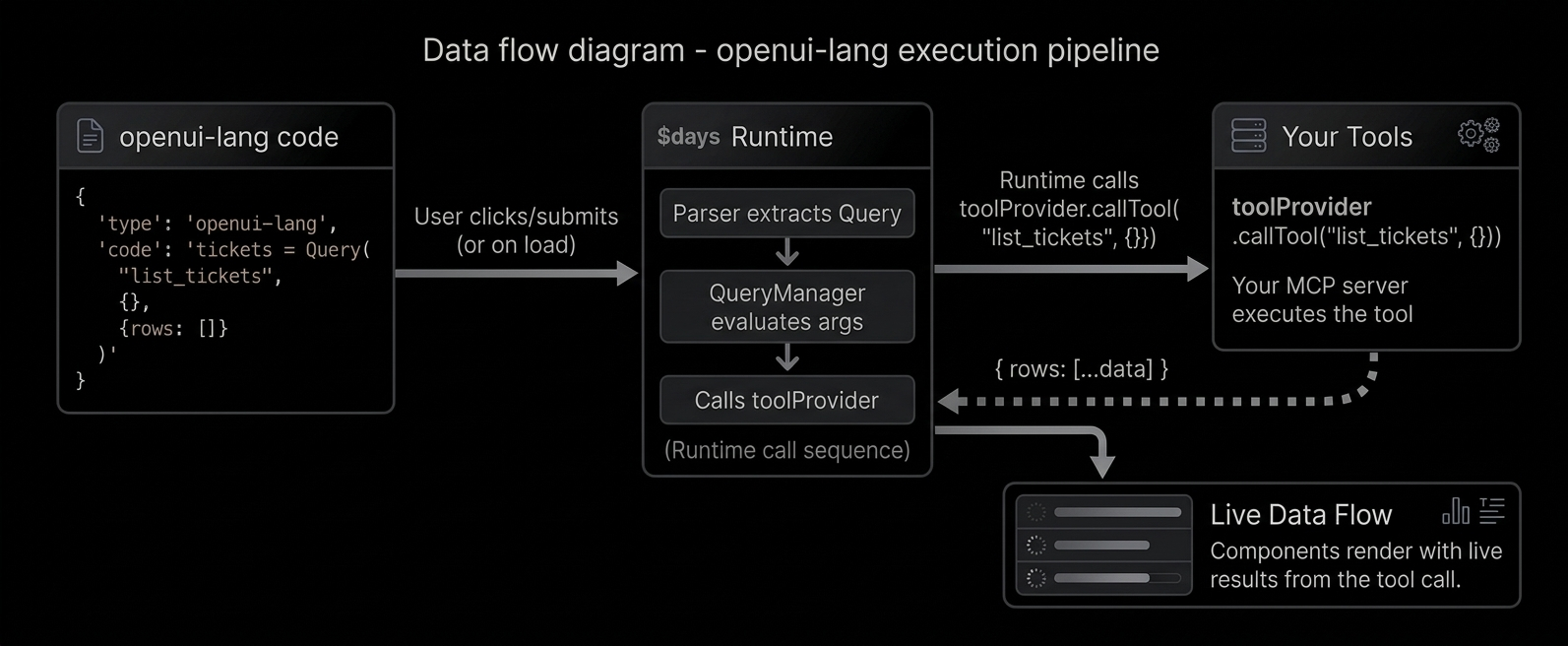

Reading data with Query [#reading-data-with-query]

```text

data = Query("list_tickets", {}, {rows: []})

```

What each argument means:

1. `"list_tickets"` - the tool name (matches what your server exposes)

2. `{}` - arguments to pass to the tool

3. `{rows: []}` - default value (what renders before the tool responds)

The default value is important - it lets the UI render immediately while data loads.
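To see why the default matters, here is a hypothetical model of a query as a value that starts at its default and is replaced once the tool resolves. `QueryState` is an illustrative helper, not the OpenUI runtime's API, and it assumes the tool's response has the same shape as the default:

```typescript
type Tool = (args: Record<string, unknown>) => Promise<unknown>;

// Hypothetical query holder: `value` is renderable at all times.
class QueryState<T> {
  value: T;
  loading = false;
  private readonly tool: Tool;
  private readonly args: Record<string, unknown>;

  constructor(tool: Tool, args: Record<string, unknown>, defaultValue: T) {
    this.tool = tool;
    this.args = args;
    // The default renders immediately, before the tool has responded.
    this.value = defaultValue;
  }

  async run(): Promise<T> {
    this.loading = true;
    try {
      // Assumption: the response has the same shape as the default.
      this.value = (await this.tool(this.args)) as T;
    } finally {
      // On failure the previous value (initially the default) is kept.
      this.loading = false;
    }
    return this.value;
  }
}

// Mirrors Query("list_tickets", {}, {rows: []}) with a stand-in tool.
const listTickets: Tool = async () => ({ rows: [{ title: "Login bug" }] });
const data = new QueryState(listTickets, {}, { rows: [] as { title: string }[] });
const pending = data.run();
console.log(data.value.rows.length); // 0: the default is still showing
pending.then(() => console.log(data.value.rows.length)); // 1 once the tool responds
```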

Using Query results [#using-query-results]

Query results are just data. Access fields with dot notation:

```text

tbl = Table([Col("Title", data.rows.title), Col("Status", data.rows.status)])

chart = LineChart(data.rows.day, [Series("Views", data.rows.views)])

```

`data.rows.title` extracts the `title` field from every row - like a column pluck.
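The pluck behavior can be modeled as dot-path resolution that maps over arrays: when a path segment meets an array, the key is read from every element. A hypothetical sketch for illustration, not the runtime's own resolver:

```typescript
// Hypothetical dot-path resolver with column plucking, illustrating what
// `data.rows.title` evaluates to.
function resolvePath(value: unknown, path: string): unknown {
  return path.split(".").reduce<unknown>((current, key) => {
    if (Array.isArray(current)) {
      // Reading a field through an array plucks it from every element.
      return current.map((item) =>
        item !== null && typeof item === "object"
          ? (item as Record<string, unknown>)[key]
          : undefined,
      );
    }
    if (current !== null && typeof current === "object") {
      return (current as Record<string, unknown>)[key];
    }
    return undefined;
  }, value);
}

const data = {
  rows: [
    { title: "Login bug", status: "open" },
    { title: "Slow export", status: "closed" },
  ],
};

const titles = resolvePath(data, "rows.title"); // ["Login bug", "Slow export"]
```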

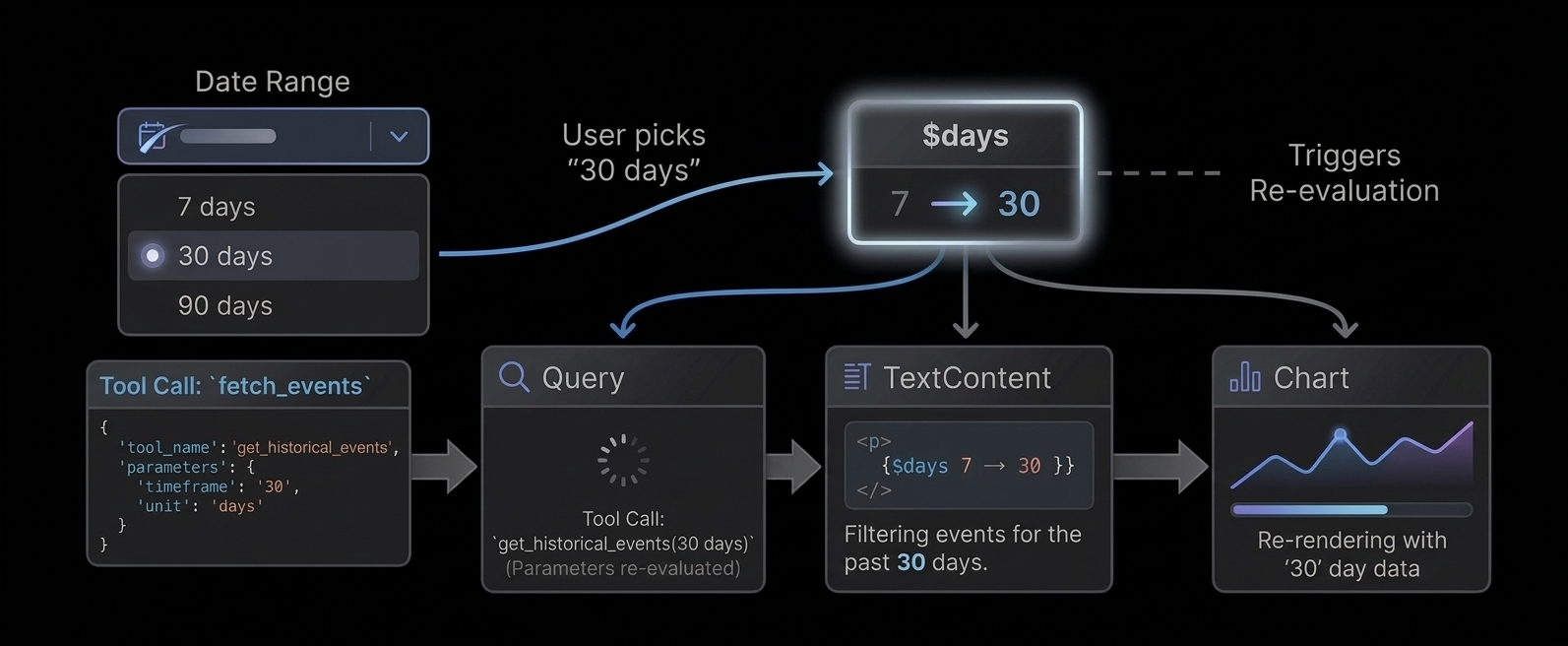

Reactive queries [#reactive-queries]

Pass a `$variable` in the query args - the query re-runs when the variable changes:

```text

$days = "7"

data = Query("analytics", {days: $days}, {rows: []})

filter = Select("days", $days, [SelectItem("7", "7 days"), SelectItem("30", "30 days")])

```

User picks "30" → `$days` updates → Query re-fetches with `{days: "30"}` → chart updates.
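The chain above can be sketched with a tiny observable variable: the query reads `$days` when it runs and subscribes to future changes. `Variable` and `bindQuery` are hypothetical helpers for illustration, not the OpenUI runtime's API:

```typescript
type Listener = (value: string) => void;

// Hypothetical observable variable standing in for `$days`.
class Variable {
  value: string;
  private listeners: Listener[] = [];

  constructor(initial: string) {
    this.value = initial;
  }

  subscribe(listener: Listener): void {
    this.listeners.push(listener);
  }

  set(next: string): void {
    if (next === this.value) return; // unchanged value: no re-fetch
    this.value = next;
    for (const listener of this.listeners) listener(next);
  }
}

// Run the query once with the current value, then again on every change
// with freshly resolved args, e.g. {days: "30"}.
function bindQuery(variable: Variable, runQuery: (args: { days: string }) => void): void {
  runQuery({ days: variable.value }); // initial load
  variable.subscribe((days) => runQuery({ days }));
}

const $days = new Variable("7");
const calls: Array<{ days: string }> = [];
bindQuery($days, (args) => calls.push(args));
$days.set("30"); // user picks "30 days"
// calls is now [{ days: "7" }, { days: "30" }]
```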

Auto-refresh [#auto-refresh]

Add a fourth argument for periodic refresh (in seconds):

```text

health = Query("get_server_health", {}, {cpu: 0, memory: 0}, 30)

```

This re-fetches every 30 seconds - great for monitoring dashboards.
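Periodic refresh can be modeled as a timer that re-runs the tool call and hands back a way to stop it. A hypothetical sketch built on `setInterval`; `startAutoRefresh` is not part of OpenUI, and logging and continuing on errors is an assumed policy:

```typescript
// Re-run `run` every `refreshSeconds` seconds; returns a stop function
// (e.g. to call when the component unmounts).
function startAutoRefresh(
  run: () => void | Promise<unknown>,
  refreshSeconds: number,
): () => void {
  if (!(refreshSeconds > 0)) {
    throw new RangeError("refreshSeconds must be a positive number");
  }
  const timer = setInterval(() => {
    // Errors are logged and polling continues on the next tick.
    Promise.resolve()
      .then(run)
      .catch((err) => console.error("auto-refresh failed:", err));
  }, refreshSeconds * 1000);
  return () => clearInterval(timer);
}

// Mirrors Query("get_server_health", {}, {cpu: 0, memory: 0}, 30).
const stop = startAutoRefresh(() => console.log("re-fetching get_server_health"), 30);
// ...later, when the dashboard is closed:
stop();
```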

Writing data with Mutation [#writing-data-with-mutation]

```text

createResult = Mutation("create_ticket", {title: $title, priority: $priority})

```

Mutations are NOT executed on page load. They only run when triggered by `@Run`:

```text

submitBtn = Button("Create", Action([@Run(createResult), @Run(tickets), @Reset($title)]))

```

This button does three things in order:

1. `@Run(createResult)` - executes the mutation (creates the ticket)

2. `@Run(tickets)` - re-fetches the tickets query (refreshes the table)

3. `@Reset($title)` - clears the form
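"In order" can be modeled as awaiting each step before starting the next, so the table re-fetch only starts after the mutation has finished. A hypothetical sketch; `runAction` and the step functions are illustrative stand-ins, and stopping the chain when a step throws is an assumption about error behavior:

```typescript
type Step = () => void | Promise<void>;

// Run each step in sequence, waiting for the previous one to finish.
// If a step throws, later steps do not run (assumed behavior).
async function runAction(steps: Step[]): Promise<void> {
  for (const step of steps) {
    await step();
  }
}

// Hypothetical stand-ins for @Run(createResult), @Run(tickets), @Reset($title).
const log: string[] = [];
const form = { title: "Login bug" };

const steps: Step[] = [
  async () => { log.push(`create_ticket(${form.title})`); },
  async () => { log.push("list_tickets"); },
  () => { form.title = ""; log.push("reset title"); },
];

runAction(steps).then(() => console.log(log));
```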

Error handling [#error-handling]

Check a mutation's `status` (here `createResult.status`) for feedback:

```text

createResult.status == "error" ? Callout("error", "Failed", createResult.error) : null

createResult.status == "success" ? Callout("success", "Created", "Ticket added.") : null

```
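The `status`/`error` fields read above suggest a small state machine around each mutation call. A hypothetical sketch; the `"idle"` and `"loading"` states, the `data` field, and `createMutation` itself are assumptions for illustration, not the runtime's documented shape:

```typescript
type MutationStatus = "idle" | "loading" | "success" | "error";

interface MutationResult<T> {
  status: MutationStatus;
  data?: T;
  error?: string;
}

// Wrap a tool so each run records its outcome on a shared result object,
// which UI expressions like `createResult.status == "error"` can read.
function createMutation<A, T>(tool: (args: A) => Promise<T>) {
  const result: MutationResult<T> = { status: "idle" };

  async function run(args: A): Promise<MutationResult<T>> {
    result.status = "loading";
    delete result.error;
    try {
      result.data = await tool(args);
      result.status = "success";
    } catch (err) {
      result.status = "error";
      result.error = err instanceof Error ? err.message : String(err);
    }
    return result;
  }

  return { result, run };
}
```

Catching the error into `result` (instead of rethrowing) is what makes the failure renderable: the UI shows a `Callout` rather than the action crashing.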

How tools connect to the runtime [#how-tools-connect-to-the-runtime]

The Renderer accepts a `toolProvider` prop that handles tool execution. Pass either a function map or an [MCP](https://modelcontextprotocol.io/docs/getting-started/intro) client.
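On the runtime side, supporting both provider kinds comes down to a dispatch step. A hypothetical sketch: `McpLikeClient` is modeled on MCP's `tools/call` request (a tool `name` plus an `arguments` object) but is not the Renderer's actual type, and `callTool` is an illustrative helper:

```typescript
type ToolArgs = Record<string, unknown>;
type FunctionMap = Record<string, (args: ToolArgs) => Promise<unknown>>;

// Hypothetical client shape modeled on MCP's tools/call request.
interface McpLikeClient {
  callTool(params: { name: string; arguments: ToolArgs }): Promise<unknown>;
}

type ToolProvider = FunctionMap | McpLikeClient;

// Caveat of this sketch: a function map with a tool literally named
// "callTool" would be mistaken for a client.
function isMcpLike(provider: ToolProvider): provider is McpLikeClient {
  return typeof (provider as McpLikeClient).callTool === "function";
}

async function callTool(provider: ToolProvider, name: string, args: ToolArgs): Promise<unknown> {
  if (isMcpLike(provider)) {
    return provider.callTool({ name, arguments: args });
  }
  // Only own properties count, so names like "toString" are not tools.
  const fn = Object.prototype.hasOwnProperty.call(provider, name) ? provider[name] : undefined;
  if (typeof fn !== "function") {
    throw new Error(`Unknown tool: ${name}`);
  }
  return fn(args);
}
```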


When a `$variable` changes:

1. All inputs bound to it update their display

2. All `Query` calls that reference it in their args re-fetch

3. All expressions that reference it re-evaluate

4. The UI re-renders with new values

No event listeners. No `useEffect`. No wiring. Just declare and reference.
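The propagation steps above behave like a small dependency graph: a variable notifies its dependents, derived expressions recompute, and their own dependents follow. A hypothetical mini-runtime for illustration; `Signal` and `derived` are not OpenUI APIs:

```typescript
// Hypothetical reactive cell: holds a value and notifies dependents on change.
class Signal<T> {
  private value: T;
  private readonly dependents = new Set<() => void>();

  constructor(initial: T) {
    this.value = initial;
  }

  get(): T {
    return this.value;
  }

  set(next: T): void {
    if (Object.is(next, this.value)) return; // unchanged: nothing re-evaluates
    this.value = next;
    this.dependents.forEach((notify) => notify());
  }

  onChange(notify: () => void): void {
    this.dependents.add(notify);
  }
}

// A derived expression: recomputes whenever its source changes, and
// notifies its own dependents in turn.
function derived<A, R>(source: Signal<A>, compute: (a: A) => R): Signal<R> {
  const out = new Signal(compute(source.get()));
  source.onChange(() => out.set(compute(source.get())));
  return out;
}

const $days = new Signal("7");
const label = derived($days, (d) => `Last ${d} days`); // an expression
const queryArgs = derived($days, (d) => ({ days: d })); // a query's args
$days.set("30");
// label.get() === "Last 30 days"; queryArgs.get() is { days: "30" }
```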

# The Renderer
